As the speed and data rate of digital links increase, loss in PCB traces is increasingly becoming a bottleneck. Co-packaged optics improves signal integrity by moving the optical conversion closer to the ASIC.

For centuries, the speed of communication was limited by the medium that carried the information: messengers on foot, messengers on horseback, letters across the ocean. Distance and transportation defined the limits. The invention of the telegraph and the telephone changed this. Once the medium became almost instantaneous, the constraint shifted from transmission to interpretation: how fast could a Morse operator decode a message, or how fast could spoken information be understood? For most of the computing era, interconnect technology was largely overlooked. Processing capacity grew so rapidly that copper traces, backplanes and printed circuit board (PCB) routing were considered "fast enough" inside the equipment. The traditional modular system is built around copper backplanes and electrical interconnects, as shown in Figure 1.

Today, that assumption no longer holds. As artificial intelligence systems and hyperscale architectures continue to drive up bandwidth demand, the medium has once again become the decisive factor. Signal loss, power-hungry signal conditioning and density limits mean that PCBs are no longer the natural channel for the fastest links. How data is moved has once again become central to how fast information can be shared. The bottleneck is particularly evident in scaling GPU fabrics, hyperscale switching environments, and the AI clusters now being built in the largest data centers. At these bandwidth levels, the interconnect is no longer a detail at the edge of the design. It becomes critical. Power consumption, signal integrity, density and latency are all affected by the way bits move between chips. This is the background against which co-packaged optics has emerged. Although sometimes described as revolutionary, the shift is better understood as evolutionary. Co-packaged optics is not a sudden break from the past; it represents the next step toward high-speed connectivity, driven by the same engineering pressures that have shaped interconnect design for decades.

PCBs and backplanes: The original path

For most of the history of modern electronics, PCBs and copper backplanes have formed the backbone of modular electronic systems. Backplane connectors, copper traces and electrical signaling allowed architects to build large, serviceable platforms in which processors, line cards and subsystems could communicate efficiently within the rack. Telecommunication routers and switches scaled very well using these copper-based building blocks. Connector technology developed in tandem with silicon, increasing pin density, improving impedance control, and enhancing electrical performance generation after generation. For many years, copper gave engineers what they needed: a familiar, manufacturable, reliable and cost-effective medium. But the reality of high-speed electrical scaling is that each generation demands more of the physical channel. Eventually, cracks began to appear in the backplane.

Copper scaling dominates the system

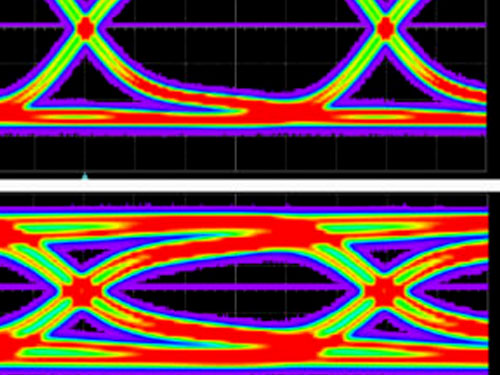

As data rates continue to climb, the fundamental limitations of electrical transmission become increasingly difficult to ignore. Loss rises rapidly with frequency, reflections and discontinuities become more harmful, and crosstalk margins shrink. The physical realities of PCB design (trace length, vias, connector transitions and routing constraints) begin to dominate the link budget. As the data rate increases, the combined effect of loss and jitter begins to close the signal eye, as shown in Figure 2.
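The frequency dependence of loss can be illustrated with a rough first-order model. The sketch below assumes that insertion loss is the sum of a conductor (skin-effect) term that grows with the square root of frequency and a dielectric term that grows linearly with frequency, both scaled by trace length. The function name and the coefficients `k_skin` and `k_diel` are hypothetical placeholders chosen for illustration, not measured values for any real laminate or trace geometry.

```python
import math

def trace_loss_db(freq_ghz, length_in, k_skin=0.15, k_diel=0.05):
    """First-order insertion loss estimate (dB) for a PCB trace.

    k_skin (dB/inch/sqrt(GHz)) and k_diel (dB/inch/GHz) are illustrative
    assumptions, not data for any specific material.
    """
    skin = k_skin * math.sqrt(freq_ghz)   # conductor loss grows with sqrt(f)
    dielectric = k_diel * freq_ghz        # dielectric loss grows linearly with f
    return length_in * (skin + dielectric)

if __name__ == "__main__":
    # Compare the same 10-inch trace at several frequencies.
    for f in (7, 14, 28, 56):
        print(f"{f:>3} GHz, 10 in: {trace_loss_db(f, 10):5.1f} dB")
```

Under these assumptions, each doubling of frequency costs more than the previous one for the same trace, while halving the trace length halves the loss at any frequency. That second property is why shortening the electrical path is such an effective lever.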

At lower rates, these problems can usually be managed through sound layout practices and modest equalization. As signaling rates continue to rise, however, more and more of the system's complexity goes into protecting the data rather than moving it. Each new speed milestone requires additional equalizers, retimers and more complex encoding. These techniques are effective, but they come at a cost. More power is spent preserving the signal, and more silicon is spent moving bits rather than computing. In large multi-rack systems, copper cabling also becomes a physical limitation: the limits of weight, bulk and reach are quickly exposed. This was one of the first forces that brought optical fiber into the picture. Copper cables struggled to support the reach required across multiple racks in telecommunication routers, and fiber offered a practical solution long before anyone began talking about co-packaged photonics.

Extending the life of copper

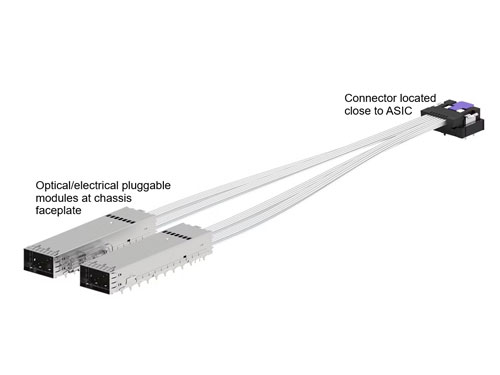

The industry's first major response was straightforward: if long electrical paths are the problem, shorten them. Instead of routing high-speed signals to the edge of the board, connectors were moved close to the ASIC to reduce the electrical distance. On-board and near-package connector architectures shorten the electrical path and improve signal integrity. This step adds margin and extends the useful life of copper. But it also introduces new challenges. Bringing the connector closer to the silicon requires tighter mechanical tolerances, the assembly process becomes more complex, and serviceability is reduced. Every improvement comes with trade-offs, but innovation continues. The next step is to bypass the PCB entirely for the fastest channels.

Near-chip cabling

As PCB routing becomes harder to scale, many designers have begun to route high-speed signals into copper cable assemblies placed next to the silicon. Twinax and similar high-performance cable technologies can outperform long PCB traces at high data rates, offering better loss characteristics and longer reach. Near-chip cabling, shown in Figure 3, frees designers from the limitations of long board routes. Instead of forcing copper traces to span the entire PCB or backplane, signals travel through a more controlled medium. As more high-speed channels move from PCBs into cable assemblies, the bulk and complexity of the copper cabling grow accordingly.

Unfortunately, this is still an electrical solution. Channel performance improves, but the basic overhead of electrical signaling is not eliminated. Retimers, encoding complexity and power consumption remain part of the toolbox. As demand for higher bandwidth density continues, near-chip cabling runs into the same question: how far can copper be pushed before the architecture is dominated by signal conditioning?

Co-packaged copper

Innovation in copper has not stopped at near-chip cabling. The roadmap keeps moving closer, to the point of taking high-speed electrical connections directly off the chip substrate. Co-packaged copper further shortens trace length and supports higher I/O density. At this scale, however, the package environment becomes crowded. Thermal constraints intensify, mechanical integration becomes more delicate, and connector density approaches practical limits. Copper can still scale, but the remaining margin shrinks with every new speed milestone. Electrical innovation continues, and engineers keep extending the useful life of copper. There is a reason copper and optics are being developed in parallel: engineers recognize that although copper's headroom shrinks with each signaling generation, it remains crucial for power delivery and many short-reach interconnects.

When optics first proved its value

Optics did not enter system design because engineers wanted something exotic. Optical fiber was first adopted because copper could not meet the requirements for reach and scalability. Multi-rack telecommunications routers are typical examples of early fiber adoption. In those systems, copper cabling had become too bulky and too limited in reach, while fiber enabled larger, more scalable architectures that copper could not practically support. Since then, optics has been moving closer to the silicon. On-board optics reduces the electrical distance within dense line-card systems. Even so, optics was still generally regarded as a specialty tool, used only when copper's reach was insufficient. With surging bandwidth demand, however, optics has gradually shifted from a specialty to a necessity. At some point, systems began to spend more power cleaning up electrical signals than moving the data itself. At that point optics became inevitable, not because copper had failed, but because the physics had reached a stage where the trade-offs were no longer reasonable.

When a copper system approaches its performance limit, the question is no longer how much more complexity the electrical link can absorb, but whether a different medium can scale more naturally. Fiber and copper scale in completely different ways. High-speed twinax copper cables are limited to a few meters of reach, while optical fiber can usually support hundreds or thousands of meters. Attenuation and dispersion behave differently in an optical medium, and photonics offers scaling options that electrical signaling cannot match efficiently. The telecommunications industry has long used wavelength division multiplexing to carry multiple channels over a single fiber. This allows bandwidth to grow independently of the physical medium itself: in these systems, adding bandwidth usually requires changes only at the transmitting and receiving endpoints. Similar principles can be applied to data center photonics as optical engines move closer to the chip. Once the optics are close enough to eliminate long electrical paths, most of the retiming and coding overhead disappears. This is a powerful reason for the industry's shift in focus toward co-packaged optics.
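The wavelength division multiplexing argument comes down to simple arithmetic: the capacity of one fiber is the number of wavelengths multiplied by the rate carried on each wavelength. The sketch below is a minimal illustration of that relationship. The function names and the numbers in the usage example are hypothetical and do not describe any particular product or standard.

```python
def fiber_capacity_gbps(wavelengths, rate_per_wavelength_gbps):
    """Aggregate capacity of one fiber carrying several wavelengths."""
    return wavelengths * rate_per_wavelength_gbps

def fibers_needed(target_gbps, wavelengths, rate_per_wavelength_gbps):
    """Fibers required to reach a target bandwidth (integer ceiling division)."""
    per_fiber = fiber_capacity_gbps(wavelengths, rate_per_wavelength_gbps)
    return -(-target_gbps // per_fiber)

if __name__ == "__main__":
    target = 51_200  # hypothetical total bandwidth in Gb/s
    for n in (1, 4, 8):
        print(f"{n} wavelength(s) per fiber: {fibers_needed(target, n, 100)} fibers")
```

In this model the fiber itself never changes. Adding wavelengths at the endpoints reduces the fiber count for the same total bandwidth, which is the sense in which bandwidth growth is decoupled from the physical medium.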

What is co-packaged optics?

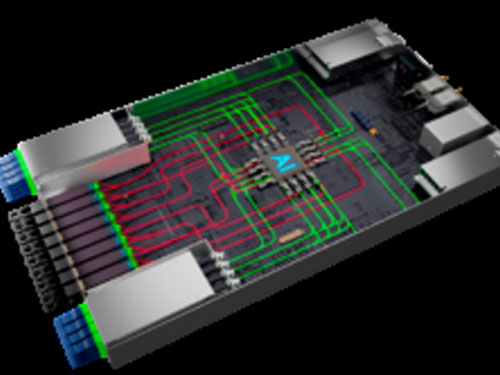

Traditionally, optical conversion takes place in pluggable modules at the edge of the system. The ASIC communicates electrically over PCB traces, and optical components appear only at the front panel. Co-packaged optics moves this boundary. The architectural shift is clearest when viewed from the physical structure: Figure 4 shows how the optical engine moves next to the ASIC package, with the optical fiber leaving directly from the substrate.

CPO pulls the electrical-to-optical interface into the package environment. Rather than converting signals at the system edge, the optical transmit and receive functions sit next to the ASIC, just a few millimeters away. The benefits are significant. The electrical path is shortened, retiming and conditioning overhead can be reduced, and the substantial silicon area and power once spent driving long copper paths can be eliminated. Co-packaged optics should be understood not as a new function, but as an architectural shift in where the conversion takes place.

When does CPO enter the discussion?

Most engineers do not adopt optics because it is fashionable. Optics is adopted when electrical links can no longer scale efficiently. Co-packaged optics becomes relevant when copper link margins collapse with each generation and escape routing becomes a package-level limitation. It also enters the discussion when bandwidth per rack exceeds what front-panel optics can support and power per bit becomes a hard architectural constraint. In most cases, CPO is not intended to replace copper everywhere. Instead, it applies optics at the edge of the chip, where distance, density and power consumption converge.

Engineering building blocks and open challenges

Several key elements are required to realize co-packaged optics. Optical engines and photonic dies must be tightly integrated with the substrate. External laser sources are typically needed, because keeping the lasers off the package improves thermal stability and long-term reliability; the light can still be delivered efficiently to the optical engine over a dedicated fiber path. Roadmaps for next-generation switch chips and GPU fabrics already point to more channels per package. Connectors must become detachable and serviceable, rather than fragile, permanently attached components. The fiber-to-chip connection remains one of the hardest problems: attaching hundreds of fibers to a compact substrate in a way that is both manufacturable and detachable is no easy task. CPO is technically feasible, but scaling it to volume deployment remains a major hurdle.

Who will be the first to adopt co-packaged optics?

Early adoption of co-packaged optics is most likely to come from hyperscalers and builders of artificial intelligence infrastructure, where bandwidth density and energy efficiency are critical. Large training clusters, switching fabrics and latency-sensitive systems will be the first to justify co-packaged optical architectures. In these environments, even a small reduction in power per bit or in latency becomes a significant system-level benefit when multiplied across thousands of interconnected devices. Once fiber can be brought directly off the chip, the technology will have broad applicability. The remaining obstacle is the maturity of the ecosystem. The industry must scale from manufacturing thousands of complex photonic components to producing hundreds of thousands of systems based on co-packaged optics.
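The scaling argument for power per bit can be made concrete with a back-of-the-envelope calculation: power equals energy per bit multiplied by bit rate, and the saving is then multiplied by the number of devices. The sketch below shows that arithmetic. The function names are hypothetical, and the energy, bandwidth and device counts in the usage example are assumed values for illustration only, not measurements or vendor figures.

```python
def link_power_watts(energy_pj_per_bit, bandwidth_gbps):
    """Power drawn by interconnect I/O: (J/bit) * (bit/s)."""
    return energy_pj_per_bit * 1e-12 * bandwidth_gbps * 1e9

def fleet_savings_kw(saving_pj_per_bit, bandwidth_gbps, devices):
    """Total power saved across a fleet, in kilowatts."""
    return link_power_watts(saving_pj_per_bit, bandwidth_gbps) * devices / 1000

if __name__ == "__main__":
    # Assumed values: 5 pJ/bit saved on a 51,200 Gb/s device, 1,000 devices.
    per_device = link_power_watts(5, 51_200)
    fleet = fleet_savings_kw(5, 51_200, 1_000)
    print(f"per device: {per_device:.0f} W, fleet: {fleet:.0f} kW")
```

With these assumed inputs, a saving of a few picojoules per bit works out to hundreds of watts per device and hundreds of kilowatts across the fleet, which is why a seemingly small per-bit improvement matters at this scale.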

Conclusion: The next step at the edge of the chip

Co-packaged optics is not a sudden revolution but the next step for high-speed connectivity inside the package. Copper will remain crucial, especially for power delivery and short-reach connections, but where the electrical overhead is no longer reasonable, optics will become inevitable. The future is hybrid. Copper and optical fiber will coexist, and as system architects continue the hard work of moving bandwidth closer to the silicon, each will be used where it makes the most engineering sense.